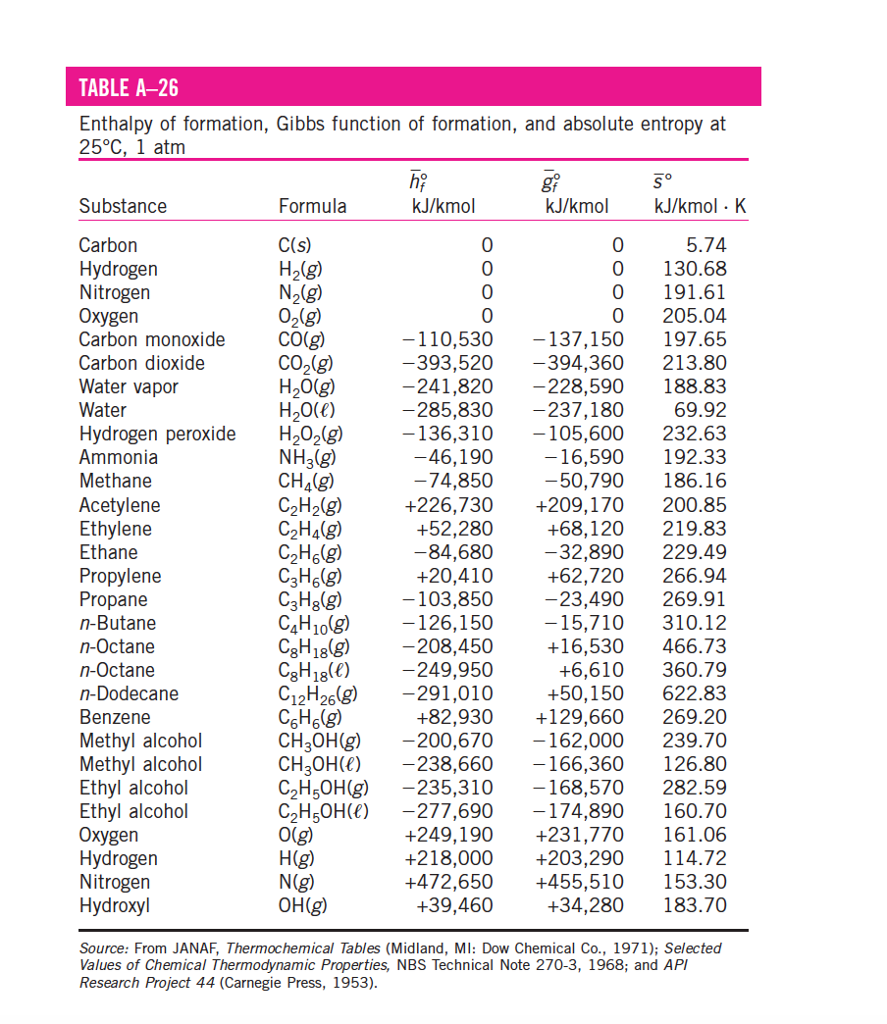

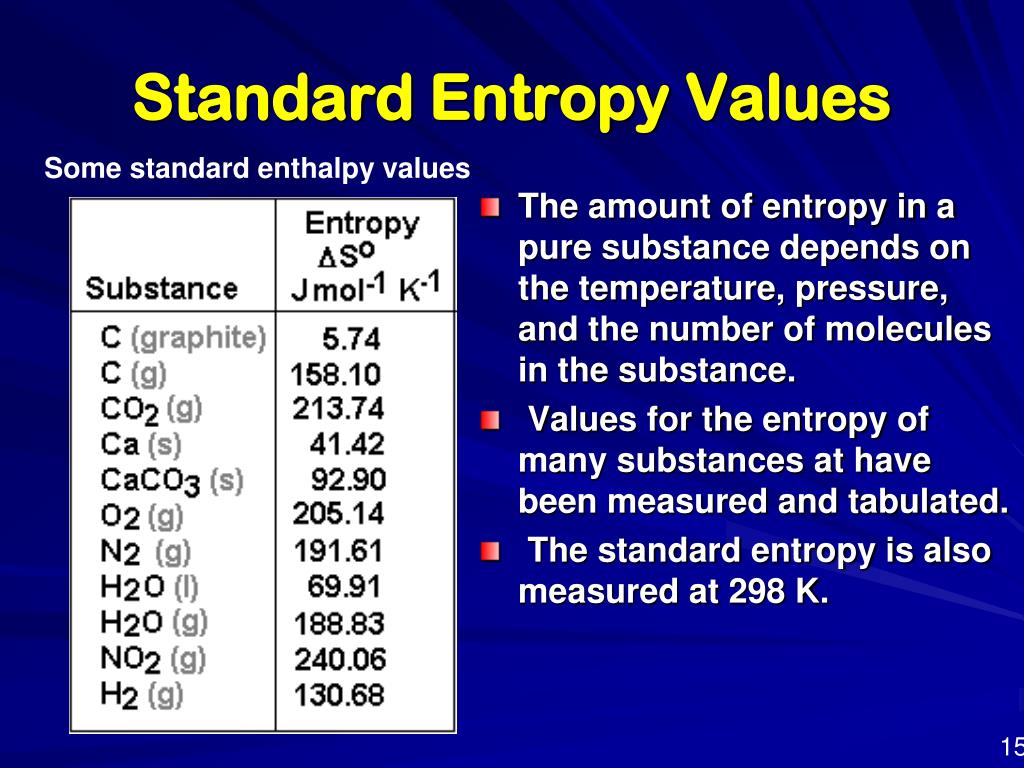

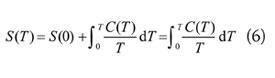

The third law of thermodynamics has two important consequences: it defines the sign of the entropy of any substance at temperatures above absolute zero as positive, and it provides a fixed reference point that allows us to measure the absolute entropy of any substance at any temperature.

The heat capacity of solid carbon disulfide varies greatly with temperature: from 89 K to its melting point at 161 K, it increases linearly from 42 J/(mol·K) to 57 J/(mol·K). By contrast, the heat capacity $C_P$ of liquid carbon disulfide is a relatively constant 78 J/(mol·K).

Figure: Thermograms Showing That Heat Is Absorbed from the Surroundings When Ice Melts at 0 °C.

The amount of heat lost by the surroundings is the same as the amount gained by the ice. Because the melting takes place at equilibrium, with the ice and its surroundings both at 0 °C (273.15 K), the entropy gained by the ice, $q/T$, is exactly cancelled by the entropy lost by the surroundings, $-q/T$, so the entropy of the universe does not change.

The absolute entropy of a substance also tends to increase with molecular complexity, because the number of available microstates increases with molecular complexity. For example, compare the $S^\circ$ values for CH₃OH(l) and CH₃CH₂OH(l): the larger ethanol molecule has the higher standard molar entropy.

This article incorporates text from this source, which is available under the CC BY 3.0 license.
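The heat-capacity data above can be turned into entropy changes with $\Delta S = \int C_P/T\,dT$. The sketch below is a minimal illustration under stated assumptions, not the source's own worked calculation: the helper names (`entropy_change`, `cp_solid_cs2`, `cp_liquid_cs2`), the trapezoidal integration, the 298.15 K end point for the liquid, and the enthalpy of fusion of ice (6.01 kJ/mol) are all added for illustration.

```python
import math


def entropy_change(cp, t1, t2, steps=10_000):
    """Hypothetical helper: integrate C_P(T)/T from t1 to t2 (kelvin) with the
    trapezoidal rule. cp(T) must return a molar heat capacity in J/(mol K)."""
    h = (t2 - t1) / steps
    total = 0.0
    for i in range(steps + 1):
        t = t1 + i * h
        weight = 0.5 if i in (0, steps) else 1.0  # trapezoid end-point weights
        total += weight * cp(t) / t
    return total * h


def cp_solid_cs2(t):
    """Solid CS2: C_P rises linearly from 42 J/(mol K) at 89 K to 57 J/(mol K) at 161 K."""
    return 42.0 + (57.0 - 42.0) * (t - 89.0) / (161.0 - 89.0)


def cp_liquid_cs2(t):
    """Liquid CS2: C_P treated as a constant 78 J/(mol K)."""
    return 78.0


# Solid CS2 from 89 K to its melting point, numerically and in closed form.
ds_solid = entropy_change(cp_solid_cs2, 89.0, 161.0)
b = (57.0 - 42.0) / (161.0 - 89.0)   # slope of C_P, J/(mol K^2)
a = 42.0 - b * 89.0                  # intercept, J/(mol K)
# For C_P = a + bT the integral is a ln(T2/T1) + b (T2 - T1).
ds_solid_exact = a * math.log(161.0 / 89.0) + b * (161.0 - 89.0)

# Liquid CS2 warmed from 161 K to an assumed end point of 298.15 K.
ds_liquid = entropy_change(cp_liquid_cs2, 161.0, 298.15)

# Ice melting at 0 C: the ice gains q/T and surroundings at the same T lose q/T.
Q_FUS_ICE = 6010.0   # J/mol, assumed enthalpy of fusion of ice
T_MELT = 273.15      # K
ds_ice = Q_FUS_ICE / T_MELT
ds_universe = ds_ice + (-Q_FUS_ICE / T_MELT)

# Counter-example: warmer surroundings (25 C) supply the same heat,
# lose less entropy than the ice gains, so the universe's entropy rises.
ds_universe_warm = ds_ice + (-Q_FUS_ICE / 298.15)

print(f"solid CS2, 89-161 K:   {ds_solid:.2f} J/(mol K), closed form {ds_solid_exact:.2f}")
print(f"liquid CS2, 161-298 K: {ds_liquid:.2f} J/(mol K)")
print(f"ice at 0 C, universe:  {ds_universe:.2f} J/(mol K)")
print(f"ice with 25 C surroundings, universe: {ds_universe_warm:.2f} J/(mol K)")
```

The closed form for the linear solid-phase $C_P$ serves as a check on the numerical integral, and the second ice case shows that the cancellation relies on the ice and its surroundings being at the same temperature.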
Measuring absolute entropy is difficult (how do you put a number on disorder?), but once absolute entropies are known we can use them to calculate ΔS, the entropy change of a reaction.

In his early work, Boltzmann interpreted ρ as a density in phase space, without mentioning probability; but since ρ satisfies the axiomatic definition of a probability measure, we can retrospectively interpret it as a probability anyway. Gibbs gave an explicitly probabilistic interpretation in 1878. Boltzmann himself used an expression equivalent to equation (3), the Gibbs entropy $S = -k_B \sum_i p_i \ln p_i$, in his later work and recognized it as more general than equation (1), $S = k_B \ln W$. That is, equation (1) is a corollary of equation (3), and not vice versa: in every situation where equation (1) is valid, equation (3) is valid also, but not the other way round. When all $W$ microstates are equally probable, $p_i = 1/W$ and equation (3) reduces to equation (1).

Boltzmann entropy excludes statistical dependencies

The term Boltzmann entropy is also sometimes used to indicate entropies calculated based on the approximation that the overall probability can be factored into an identical separate term for each particle, i.e., assuming each particle has an identical independent probability distribution and ignoring interactions and correlations between the particles. This is exact for an ideal gas of identical particles that move independently apart from instantaneous collisions, and is an approximation, possibly a poor one, for other systems.

The Boltzmann entropy is obtained if one assumes one can treat all the component particles of a thermodynamic system as statistically independent. The probability distribution of the system as a whole then factorises into the product of $N$ separate identical terms, one term for each particle. When the summation is taken over each possible state in the 6-dimensional phase space of a single particle (rather than the $6N$-dimensional phase space of the system as a whole), the Gibbs entropy reduces to the Boltzmann entropy

$$S_B = -N k_B \sum_i p_i \ln p_i,$$

where $p_i$ is now the probability of single-particle state $i$.
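To make the relation between equations (1) and (3), and the factorisation argument, concrete, here is a minimal sketch using discrete probabilities rather than a continuous phase space. The helper `gibbs_entropy`, the toy single-particle probabilities and the perfectly correlated pair are hypothetical illustrations, not taken from the source.

```python
import itertools
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact in the 2019 SI)


def gibbs_entropy(probs, k=K_B):
    """Hypothetical helper: S = -k * sum(p ln p); zero-probability states contribute nothing."""
    return -k * sum(p * math.log(p) for p in probs if p > 0)


# 1) W equally probable microstates: equation (3) reduces to equation (1), S = k ln W.
W = 8
s_gibbs_uniform = gibbs_entropy([1.0 / W] * W)
s_boltzmann = K_B * math.log(W)

# 2) N independent, identical particles with toy single-particle probabilities.
single = [0.5, 0.3, 0.2]
N = 3
# The joint distribution factorises: P(s1, s2, s3) = p(s1) * p(s2) * p(s3).
joint = [math.prod(states) for states in itertools.product(single, repeat=N)]
s_joint = gibbs_entropy(joint)
s_factorised = N * gibbs_entropy(single)   # S_B = -N k_B sum_i p_i ln p_i

# 3) Two perfectly correlated particles that always occupy the same state.
#    Each particle's marginal distribution is still `single`, but the only joint
#    states with nonzero probability are (i, i), each with probability single[i].
correlated_joint = list(single)
s_corr_joint = gibbs_entropy(correlated_joint)
s_corr_factorised = 2 * gibbs_entropy(single)   # what the factorised S_B would assume

print(s_gibbs_uniform, s_boltzmann)
print(s_joint, s_factorised)
print(s_corr_joint, s_corr_factorised)
```

Case 3 shows in miniature why the Boltzmann entropy can be a poor approximation when particles are correlated: the factorised estimate counts each particle's uncertainty separately, so here it comes out at twice the true joint entropy.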
